Captioning Business Videos at Scale

Most teams know captions matter. The real problem is getting them done consistently across dozens of videos a month without creating a bottleneck. Here is what actually works.

Every team producing video at volume hits the same wall with captions. You know they matter. Your legal team knows they matter. Your social media manager definitely knows they matter. But adding captions to every single video, every time, across every format? That is where things break down.

We have produced over 70,000 videos for enterprise teams across four continents. Captions come up in almost every conversation we have with clients about scaling their video programs. Not because anyone questions their value, but because the process of actually getting them done consistently is harder than it should be.

This is what we have learned about making captions work at scale without slowing everything else down.

Why do captions keep falling through the cracks?

Captions are rarely the first thing a team thinks about when planning a video. They tend to get added at the end, after editing is done, as a final step before publishing. That makes them the easiest thing to skip when deadlines get tight.

In a typical enterprise video workflow, adding captions involves a separate request to production, a turnaround window, a review cycle, and then re-uploading the final file. For a single video, that is manageable. For a team shipping 15-30 videos a month, it creates a real bottleneck.

The teams we work with that handle captions well have one thing in common: they build captioning into the production process rather than treating it as an add-on. Captions are part of the brief, part of the review, and part of the delivery. Not an afterthought.

What is the business case for captioning every video?

The engagement numbers are hard to ignore. Studies consistently show that around 80% of video on social platforms is watched with the sound off. On LinkedIn, where most B2B video gets distributed, silent autoplay is the default experience. A video without captions is a video most people scroll past. For a full breakdown of the legal and engagement case, see our guide on video accessibility at scale.

But engagement is only part of the picture. There are three other reasons enterprise teams should be captioning everything.

Accessibility compliance

WCAG 2.1 requires captions on prerecorded video with audio at Level A (Success Criterion 1.2.2), so any organization targeting Level AA conformance has to provide them. For organizations in regulated industries, or those doing business with government agencies, this is not optional. It is a legal requirement. The number of accessibility-related lawsuits has been climbing year over year, and video content is increasingly in scope.

Global teams with language differences

If your company operates across multiple countries, captions help non-native English speakers follow along. This is especially relevant for onboarding videos, compliance training, and internal communications where comprehension actually matters.

SEO and discoverability

Search engines cannot watch your video. They can read your captions. Captioned video content gets indexed more effectively, which means your marketing videos and thought leadership content show up in search results more often.

What are the different approaches to video captioning?

There are three main ways teams handle captions. Each comes with tradeoffs.

Manual transcription

Someone watches the video and types out every word. This produces the most accurate results, especially for industry-specific terminology, proper nouns, and acronyms. The downside is speed. Manual transcription typically takes 4-6x the video length to complete, and it does not scale well when you are producing video in volume.

Auto-generated captions

Speech recognition technology generates captions automatically. This is fast and inexpensive. The accuracy has improved significantly in the last two years, but it still struggles with accents, technical jargon, and multiple speakers. Auto-generated captions almost always need a human review pass before publishing.

Hybrid approach

Auto-generate first, then review and edit. This is what most high-volume teams land on. You get the speed of automation with the accuracy of human review. The key is making that review step fast and easy enough that people actually do it instead of skipping it.

How do you maintain caption quality across dozens of videos?

Quality is where most caption workflows fall apart at scale. The first few videos get carefully reviewed. By video 20, someone is copy-pasting auto-generated text and hoping for the best.

Three things help:

Create a caption style guide. Decide upfront how you handle numbers (written out or digits?), acronyms (spelled out on first use?), speaker identification, and line breaks. This saves time during review because editors are not making the same decisions over and over.

Set a maximum line length. Two lines of text, around 42 characters per line, is the standard for readability. Longer lines get cut off on mobile screens. If your captions regularly exceed this, viewers on phones are missing words.

Review timing, not just text. Captions that appear a half-second late, or that stay on screen too long, feel off even when the words are correct. Good caption timing matches the natural rhythm of speech. This is something auto-generation often gets wrong, especially during pauses or when speakers overlap.
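The line-length and timing checks above are easy to automate as a first pass before human review. The following is a minimal sketch, not a production tool: the `Cue` structure and the `MIN_SECONDS` and `MAX_SECONDS` thresholds are assumptions made for illustration, and only the two-line, 42-character limits come from the guidance above.

```python
from dataclasses import dataclass
from typing import List

MAX_LINES = 2       # from the guideline above
MAX_CHARS = 42      # from the guideline above
MIN_SECONDS = 1.0   # assumption: hypothetical minimum time on screen
MAX_SECONDS = 7.0   # assumption: hypothetical maximum time on screen


@dataclass
class Cue:
    """One caption cue; start and end are in seconds."""
    start: float
    end: float
    text: str  # caption lines separated by "\n"


def lint_cue(cue: Cue) -> List[str]:
    """Return a list of human-readable problems found in a single cue."""
    problems = []
    lines = cue.text.split("\n")
    if len(lines) > MAX_LINES:
        problems.append(f"{len(lines)} lines (max {MAX_LINES})")
    for line in lines:
        if len(line) > MAX_CHARS:
            problems.append(f"line has {len(line)} characters (max {MAX_CHARS})")
    duration = cue.end - cue.start
    if duration < MIN_SECONDS:
        problems.append(f"on screen for {duration:.1f}s (min {MIN_SECONDS}s)")
    elif duration > MAX_SECONDS:
        problems.append(f"on screen for {duration:.1f}s (max {MAX_SECONDS}s)")
    return problems


# Usage: a cue that is both too long for one line and too brief on screen.
for problem in lint_cue(Cue(start=12.0, end=12.5, text="x" * 50)):
    print(problem)
```

A check like this only flags candidates for review. It cannot tell whether a caption is late relative to the speech, so the timing pass still needs a person watching the video.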

What about open captions vs. closed captions?

Open captions are burned into the video file. They are always visible and cannot be turned off. Closed captions are a separate track that viewers can toggle on or off.

For social media, open captions are usually the better choice. Most platforms support closed captions, but they display them in the platform's default style, which often clashes with your brand. Open captions let you control the font, size, color, and positioning. They also show up in video previews and thumbnails, which helps with scroll-stopping.

For internal communications and training content hosted on an LMS or intranet, closed captions give employees the choice. Some people prefer watching without captions when they have headphones on. Closed captions also support multiple languages more cleanly since you can offer separate caption tracks.

Many teams use both. Open captions for social distribution, closed captions for internal hosting. The extra effort is worth it when the same video serves multiple channels, which is increasingly common in video-first organizations.

How do you handle captions for different video types?

Not all videos need the same caption treatment.

Talking head and interview videos are straightforward. One or two speakers, clear audio, minimal background noise. Auto-generation handles these well with light editing.

Event recordings and panel discussions are harder. Multiple speakers, audience questions, cross-talk. These need speaker identification in the captions and more careful timing. If you are repurposing event video for LinkedIn, budget extra time for caption quality here.

Animated and motion graphics videos with voiceover usually have clean audio, so auto-generation works well. The challenge is syncing caption timing with visual transitions. You want captions to appear and disappear in rhythm with the animation, not against it.

Training and instructional videos have the highest accuracy bar. If a caption says "administer 5ml" when the speaker said "15ml," that is not just a typo. For training video production, treat caption review as part of the content review process, not a separate step.

What tools and workflows actually scale?

The teams that caption consistently at volume also share a tooling choice: captioning is built into their production platform, not bolted on as a separate tool.

When captions require exporting video to a third-party tool, uploading it, generating captions, downloading an SRT file, re-syncing it, and re-uploading the final version, people skip it. Not because they do not care, but because the friction is too high when you are shipping video on a deadline.

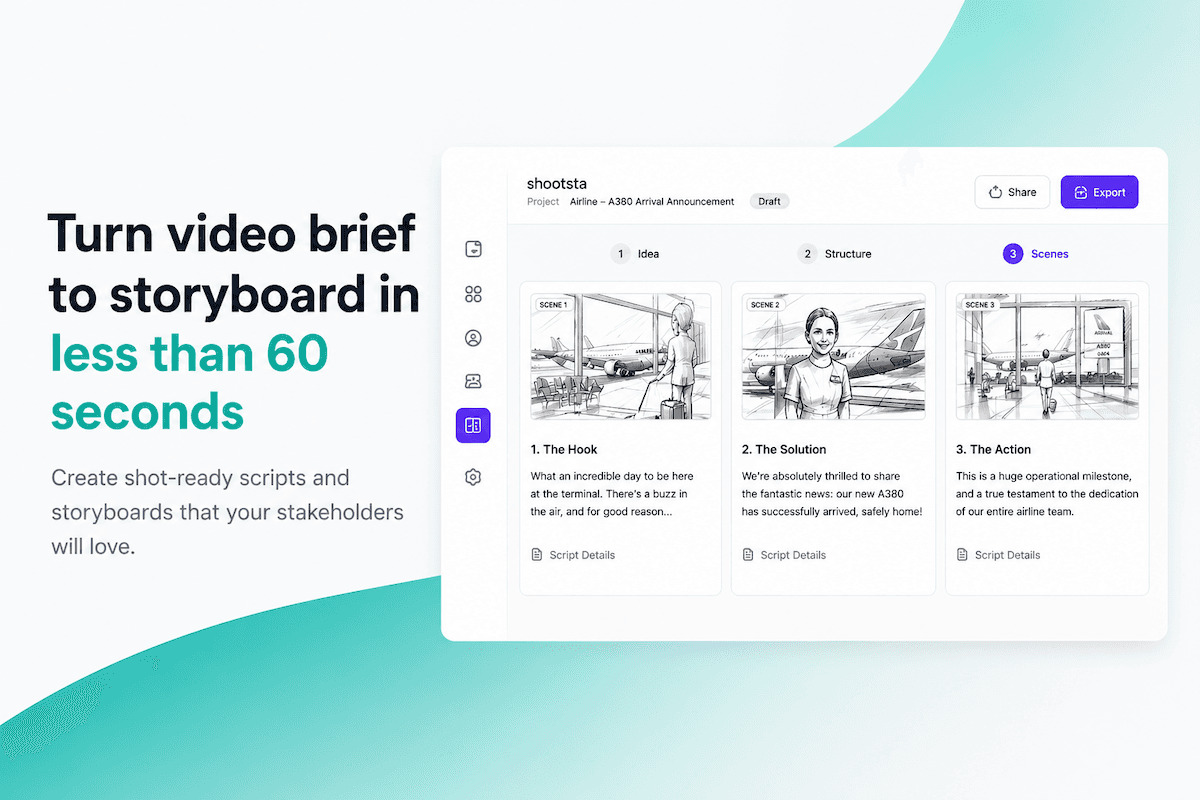

The best workflow looks like this: finish your edit, generate captions, review them in the same place, and publish. One platform, one pass. Here is how that works in Shootsta. The fewer handoffs involved, the more likely captions actually happen on every video.

If you are evaluating video production platforms, ask specifically about their captioning workflow. How many steps does it take? Can you edit captions inside the platform? Do you need to export and re-import? These details determine whether your team will actually caption everything or just the videos that have time left in the schedule.

Frequently asked questions about video captions

Do captions really improve video performance?

Yes. Multiple studies show captioned videos see 12-15% higher watch time compared to uncaptioned versions. On social platforms where autoplay is muted by default, captions are often the only thing communicating your message in the first few seconds.

Are auto-generated captions accurate enough to publish without editing?

For general conversational content with clear audio, modern speech recognition hits around 95% accuracy. That sounds high, but a 3-minute video at a typical speaking pace of around 150 words per minute contains about 450 words, so 5% errors means roughly 20 or more wrong words. For brand content, training materials, or anything with industry terminology, you should always review before publishing.
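The error count scales with how many words are spoken, so it is worth estimating for your own videos. This is a minimal sketch; the function name is hypothetical and the speaking pace in the usage line is an assumption you should replace with your own figure.

```python
def expected_wrong_words(minutes: float, words_per_minute: float, accuracy: float) -> float:
    """Estimate the number of wrong words in a transcript.

    accuracy is the fraction of words recognized correctly, e.g. 0.95.
    """
    if not 0.0 <= accuracy <= 1.0:
        raise ValueError("accuracy must be between 0 and 1")
    total_words = minutes * words_per_minute
    return round(total_words * (1.0 - accuracy), 1)


# Usage: a 3-minute video, assuming 150 words per minute (an assumed pace).
print(expected_wrong_words(minutes=3, words_per_minute=150, accuracy=0.95))
```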

What caption file format should I use?

SRT is the most widely supported format and works on YouTube, LinkedIn, Vimeo, and most LMS platforms. VTT offers more styling options and is the standard for web-based video players. If you are only using one format, SRT is the safe default.
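If you keep SRT as your master format, a basic VTT file can be derived from it, because the two formats are close: VTT starts with a `WEBVTT` header line and uses a period instead of a comma before the milliseconds in timestamps. The sketch below handles only that simple case. The function name is hypothetical, and it does not deal with styling, positioning, or a byte-order mark at the start of the file.

```python
import re

# Matches an SRT timestamp such as 00:01:02,500 and captures both halves.
_TIMESTAMP = re.compile(r"(\d{2}:\d{2}:\d{2}),(\d{3})")


def srt_to_vtt(srt_text: str) -> str:
    """Convert plain SRT text to basic WebVTT text."""
    out = ["WEBVTT", ""]
    for line in srt_text.strip().splitlines():
        # Only rewrite timing lines, so commas in caption text are untouched.
        if "-->" in line:
            line = _TIMESTAMP.sub(r"\1.\2", line)
        out.append(line)
    return "\n".join(out) + "\n"


# Usage with a small inline example.
sample = "1\n00:00:01,000 --> 00:00:03,500\nHello, and welcome.\n"
print(srt_to_vtt(sample))
```

The numeric cue indexes from the SRT file are kept as they are, since VTT accepts them as optional cue identifiers.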

How long does it take to caption a 3-minute video?

With auto-generation and a review pass, about 5-10 minutes. Manual transcription takes 12-18 minutes for the same video. At scale, the difference between these two approaches is significant. Taking the midpoints of those ranges, a team producing 30 videos a month saves roughly 3-4 hours per month by using auto-generation with review instead of manual transcription.
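To estimate the saving for your own volumes, multiply the per-video difference by the number of videos. This is a minimal sketch with a hypothetical function name; the per-video minutes in the usage line are the midpoints of the ranges quoted above, and you should substitute your own measurements.

```python
def monthly_hours_saved(videos_per_month: int,
                        manual_minutes: float,
                        auto_review_minutes: float) -> float:
    """Hours saved per month by auto-generating and reviewing captions
    instead of transcribing manually. Minutes are per video."""
    saved_minutes = (manual_minutes - auto_review_minutes) * videos_per_month
    return saved_minutes / 60.0


# Usage: 30 videos a month, midpoints of 12-18 and 5-10 minutes per video.
print(monthly_hours_saved(30, manual_minutes=15, auto_review_minutes=7.5))
```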

Do I need captions in multiple languages?

If your audience spans multiple language groups, yes. Start with your primary language and add translations for your largest audience segments. For global companies, English captions plus one or two regional languages cover most use cases. Machine translation of caption files has improved significantly, but it still needs human review for business content.

Related reading

- Captions are one slice of a bigger discipline. Read about using typography well in business video.